Deconstructing the Autonomy Blueprint

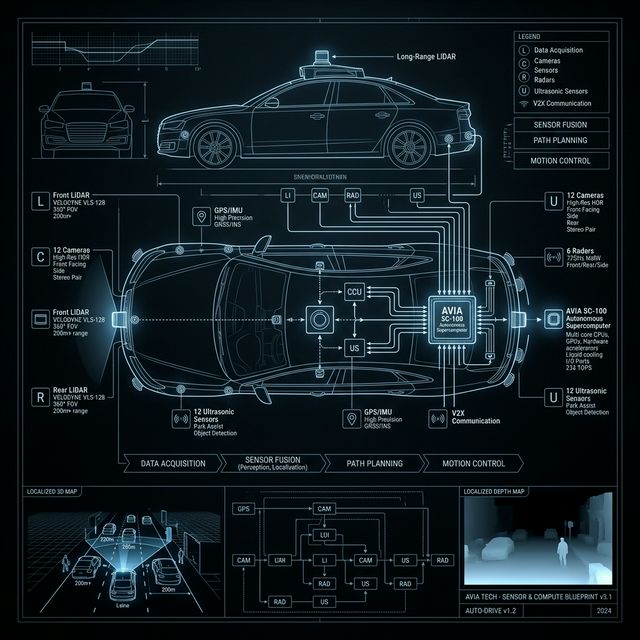

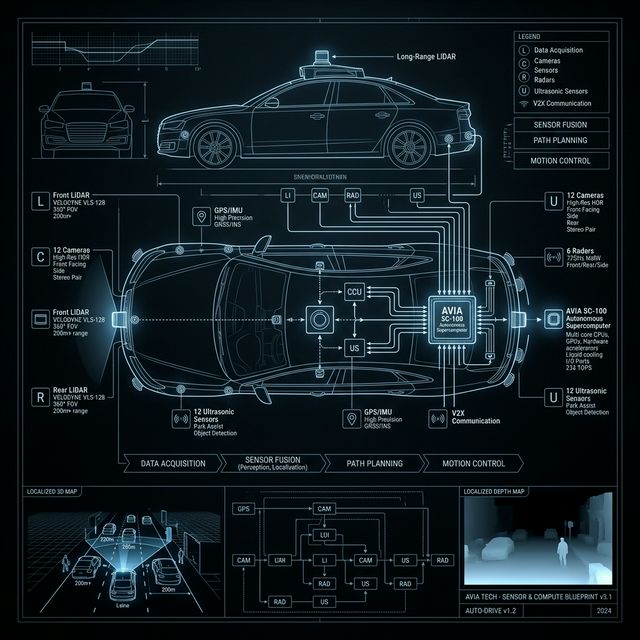

NVIDIA’s offering is broken into a few distinct, interconnected layers that let automakers deploy Level 4 autonomy years faster than they could by building the technology in-house.

Halos OS

This is the underlying operating system that acts as an unshakeable guardrail.

System Override Protocol

It runs continuous safety checks from the cloud to the car to ensure the AI's decisions never violate core safety rules (for example, by causing a collision or running a red light).
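
To make the guardrail idea concrete, here is a minimal sketch of a rule-based check that sits between a driving model and the vehicle. It is not the Halos API: `WorldState`, `PlannedAction`, `guard`, and the braking parameters are hypothetical names and values chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorldState:
    """Hypothetical snapshot of what the perception stack reports."""
    distance_to_obstacle_m: float   # free gap to the nearest obstacle ahead
    traffic_light: str              # "red", "yellow", "green", or "none"
    distance_to_stop_line_m: float  # gap to the next stop line


@dataclass(frozen=True)
class PlannedAction:
    """Hypothetical command proposed by the driving model."""
    target_speed_mps: float
    reason: str = "planner"


# The fallback the guard substitutes whenever a rule is violated.
FORCED_STOP = PlannedAction(target_speed_mps=0.0, reason="safety override")


def stopping_distance_m(speed_mps: float,
                        max_decel_mps2: float = 4.0,
                        reaction_time_s: float = 0.5) -> float:
    """Reaction distance plus braking distance: v*t + v^2 / (2a).

    The deceleration and reaction time are assumed values, not vendor figures.
    """
    return speed_mps * reaction_time_s + speed_mps ** 2 / (2.0 * max_decel_mps2)


def guard(action: PlannedAction, state: WorldState) -> PlannedAction:
    """Return the proposed action if it passes every rule, else a forced stop."""
    needed = stopping_distance_m(action.target_speed_mps)
    # Rule 1: never travel faster than we can stop within the free gap.
    if needed >= state.distance_to_obstacle_m:
        return FORCED_STOP
    # Rule 2: on red, the vehicle must still be able to stop before the line.
    if state.traffic_light == "red" and needed >= state.distance_to_stop_line_m:
        return FORCED_STOP
    return action


if __name__ == "__main__":
    cruise = PlannedAction(target_speed_mps=10.0)
    blocked = WorldState(distance_to_obstacle_m=10.0,
                         traffic_light="green",
                         distance_to_stop_line_m=50.0)
    print(guard(cruise, blocked).reason)  # prints "safety override"
```

The design point is that the guard never edits the model's plan. It either passes the plan through unchanged or replaces it with a known-safe fallback, which keeps the checker small enough to reason about independently of the AI.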

Omniverse & Cosmos

Before a car ever hits real asphalt, it is trained in the matrix. NVIDIA uses its Omniverse simulation platform to create physics-accurate "digital twins" of the real world.

"Automakers can generate synthetic data to train the AI on rare edge cases (e.g., a pedestrian dropping a mattress on a highway in a blizzard) without having to wait for it to happen in real life."

Case Study: Isuzu Autonomous Bus

1. Bolting in the Hardware

Isuzu takes the Hyperion blueprint, installs the 14 cameras, lidars, and radars onto the bus frame, and drops the Thor supercomputer under the hood.

2. Virtual Miles

They boot up Omniverse, recreate Tokyo's streets as a digital twin, and run millions of synthetically generated weather and traffic anomalies to teach the bus.

3. Loading the Brain

Alpamayo is loaded onto the bus. Live camera feeds allow the bus to recognize complex scenes (like swerving cyclists) and avoid them.

4. Deploying Safely

Halos OS constantly monitors calculations, overriding any unsafe decisions with forced stops.
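
The "Virtual Miles" step can be pictured as sampling scenario descriptions that deliberately oversample rare conditions. The sketch below is plain Python and does not call Omniverse or Cosmos. The `Scenario` fields, the category lists, and the `rare_weight` parameter are assumptions made for illustration, and a real pipeline would hand each sampled description to a simulator to render and run.

```python
import random
from dataclasses import dataclass

# Hypothetical scenario vocabulary; a real pipeline would be far richer.
WEATHER = ["clear", "rain", "fog", "snow", "blizzard"]
HAZARDS = ["none", "swerving_cyclist", "jaywalking_pedestrian",
           "dropped_cargo", "stalled_vehicle"]


@dataclass(frozen=True)
class Scenario:
    weather: str
    hazard: str
    time_of_day_h: int       # hour of day, 0 to 23
    traffic_density: float   # 0.0 (empty road) to 1.0 (gridlock)


def sample_scenarios(n: int, rare_weight: float = 5.0, seed: int = 0) -> list:
    """Draw n scenario descriptions, oversampling rare edge cases.

    rare_weight controls how much more often blizzards and hazards are drawn
    than they would be under uniform sampling of the other categories.
    """
    rng = random.Random(seed)  # seeded so a batch can be reproduced exactly
    weather_weights = [rare_weight if w == "blizzard" else 1.0 for w in WEATHER]
    hazard_weights = [1.0 if h == "none" else rare_weight for h in HAZARDS]
    return [
        Scenario(
            weather=rng.choices(WEATHER, weights=weather_weights)[0],
            hazard=rng.choices(HAZARDS, weights=hazard_weights)[0],
            time_of_day_h=rng.randrange(24),
            traffic_density=rng.random(),
        )
        for _ in range(n)
    ]


if __name__ == "__main__":
    batch = sample_scenarios(1000)
    rare = [s for s in batch
            if s.weather == "blizzard" and s.hazard == "dropped_cargo"]
    print(f"{len(rare)} of {len(batch)} scenarios combine a blizzard with dropped cargo")
```

Seeding the generator matters here: if a sampled scenario exposes a failure, the same seed reproduces the identical batch so the fix can be verified against it.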

By leveraging this open framework, Isuzu deploys a fully autonomous Level 4 bus years faster and at far lower cost than reinventing the wheel.
